Reviews

ZADORA Grzegorz, MARTYNA Agnieszka, MICHALSKA Aleksandra, WŁASIUK Patryk

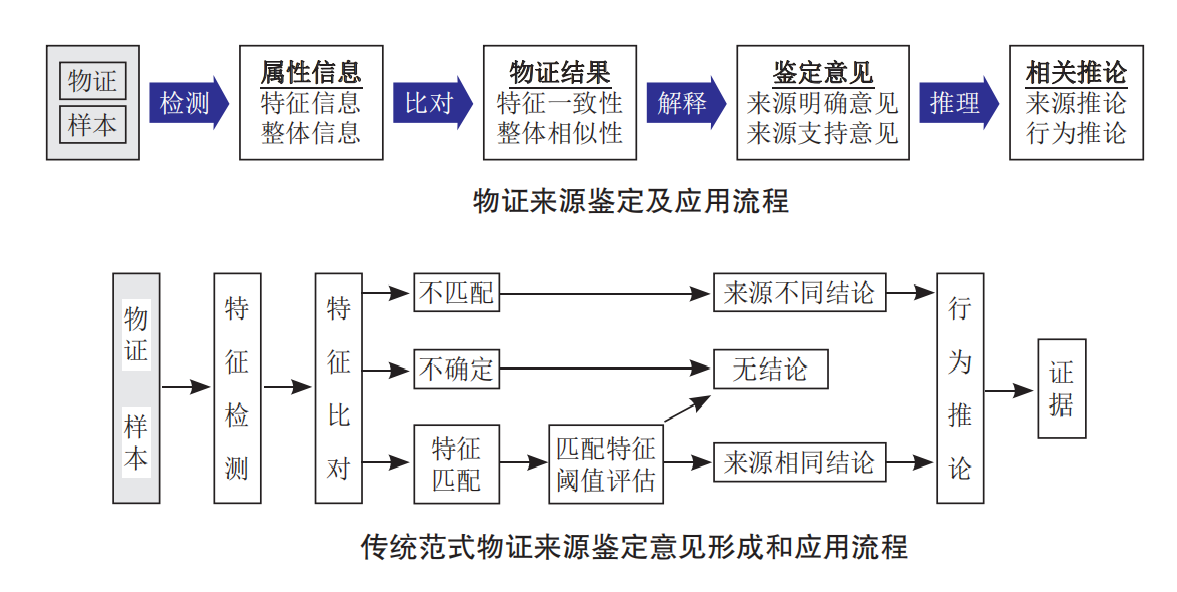

The increasing complexity of new forms of crime and the demand of those who administer justice for higher standards of scientific work require the development of new approaches for measuring the evidential value of physicochemical data obtained by applying numerous analytical methods to the analysis of various kinds of trace evidence. The methods used to evaluate these data should reflect the role of forensic experts in the administration of justice. This means that such data (evidence) should be evaluated in the context of two competing propositions, H1 and H2, formulated by the two opposing sides in legal proceedings, i.e. the prosecution and the defence. Bayesian models have been proposed for the evaluation of evidence in such contexts. This paper describes the principle of the likelihood ratio (LR) approach to the evaluation of physicochemical data in so-called comparison and classification problems (classification is, in fact, also based on comparison). LR models allow all the important factors to be included in a single calculation when the evidential value of physicochemical data is to be evaluated. These factors are the similarity of the physicochemical data observed in the compared samples, the rarity of the determined physicochemical data in the relevant population, and the possible sources of error (within- and between-sample variability). As statistical tools, LR models can only be proposed for databases described by a few variables. However, most physicochemical data are high-dimensional (e.g. spectra). It is therefore necessary to apply dimensionality reduction methods, such as graphical models or suitable chemometric tools; examples are presented in the paper. LR models should always be treated as a supportive (not the decisive!) tool, and their results should be subjected to critical analysis.
In other words, statistical methods do not deliver absolute truth, because the rates of possible false answers are an integral part of these methods, in the same way as the uncertainty associated with the applied analytical techniques. Therefore, sensitivity analysis, an equivalent of the validation process for analytical methods, should be conducted to determine their performance. The paper thus addresses how to validate LR models, using the Empirical Cross Entropy approach as an example. Such an evaluation of physicochemical data is carried out at the so-called source level, as it helps to answer the question of whether the compared samples originate from the same object. Usually, however, the fact finders (judge, prosecutor, or police) are interested in identifying the activity that led to the transfer and persistence of the recovered microtraces (which reveal similarity to the control sample) on the body, clothes or shoes. This is the so-called activity-level analysis, which is also discussed in the paper.
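To make the validation idea more concrete, the following is a minimal sketch of an Empirical Cross Entropy (ECE) calculation, assuming the commonly cited formulation in which LR values from experiments with known ground truth are penalised logarithmically at a chosen prior probability. The function name `empirical_cross_entropy`, its parameters, and the LR values in the usage lines are illustrative assumptions and are not taken from the paper.

```python
import math


def empirical_cross_entropy(lrs_h1, lrs_h2, prior_h1):
    """Return ECE in bits for a set of validation LR values.

    lrs_h1   -- LR values from comparisons where H1 was known to be true
    lrs_h2   -- LR values from comparisons where H2 was known to be true
    prior_h1 -- assumed prior probability of H1 (strictly between 0 and 1)

    Hypothetical helper written for illustration only.
    """
    if not 0.0 < prior_h1 < 1.0:
        raise ValueError("prior_h1 must lie strictly between 0 and 1")
    if not lrs_h1 or not lrs_h2:
        raise ValueError("both LR sets must be non-empty")

    prior_h2 = 1.0 - prior_h1
    prior_odds = prior_h1 / prior_h2

    # Penalty when H1 is true: large for LR values that wrongly support H2.
    penalty_h1 = sum(
        math.log2(1.0 + 1.0 / (lr * prior_odds)) for lr in lrs_h1
    ) / len(lrs_h1)

    # Penalty when H2 is true: large for LR values that wrongly support H1.
    penalty_h2 = sum(
        math.log2(1.0 + lr * prior_odds) for lr in lrs_h2
    ) / len(lrs_h2)

    return prior_h1 * penalty_h1 + prior_h2 * penalty_h2


# Invented LR values, for illustration only.
neutral = empirical_cross_entropy([1.0, 1.0], [1.0, 1.0], prior_h1=0.5)
informative = empirical_cross_entropy(
    [100.0, 50.0, 20.0], [0.01, 0.05, 0.1], prior_h1=0.5
)
misleading = empirical_cross_entropy([0.01], [100.0], prior_h1=0.5)
```

Under this formulation, a model that always returns LR = 1 serves as the neutral reference: an LR model that performs well should yield a lower ECE than `neutral` at the same prior, whereas strongly misleading LR values push the ECE above it. In practice the calculation is repeated over a range of priors to obtain an ECE curve.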